QA - Professional Software Testing

Andrej P. M.

2020-10-06

15min

QA

The fact is that quality can’t simply be added to a finished product; it has to evolve along with it. Read on to find out how to make that happen.

Introduction

When you buy a car, you can form a first impression of its quality almost instantly by looking at its color, its shape or any visible damage. But only when you start it up and take it on the road will you see whether the car is any good. Even the most luxurious, sleek vehicle might break down in the middle of the road or have a rusty frame underneath.

The same logic applies to any product, software included. A mobile app might seem fine at first glance, but as soon as you scroll down, tap a button or try the most basic features, it can start showing flaws in its business logic or design or, even worse, bugs.

A single error in eCommerce software can cost millions of dollars in revenue, and in a self-driving car it can put someone's life at risk. This is why quality assurance is so important when developing a new product.

To prevent such failures, the first effort to put together a professional testing group was made in 1958 by computer science pioneer Gerald M. Weinberg, who was then working on software for NASA's Project Mercury. Until then, it was expected that the people working on a project would not make mistakes, or would at least catch them in time. Unfortunately, humans do make mistakes, and those mistakes can have dangerous consequences, especially when you are launching people into space.

Software quality

To err is human, but an effort to avoid mistakes has to be in place. It lets us plan the project properly, minimize the impact of the mistakes we do make and, hopefully, avoid the critical ones altogether.

The concept of software quality was introduced to make sure that newly developed software is safe and functions as expected. It is commonly defined as “the degree of conformance to explicit or implicit requirements and expectations”.

Explicit and implicit requirements correspond to the two basic levels of software quality: functional and non-functional. Functional quality covers compliance with the requirements and design specifications, and it takes the user's point of view: performance, features, ease of use, and the presence or absence of defects. Non-functional quality, tied to the implicit requirements, concerns the system's inner architecture; efficiency, understandability, security and code quality fall into this category.

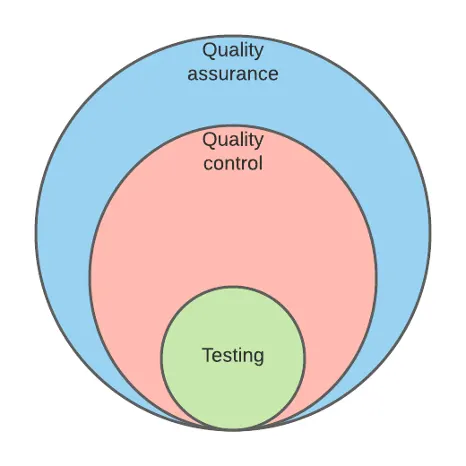

Managing the non-functional quality of software takes a lot of know-how and is usually left to the engineering team that builds it, since they know its inner workings. The functional aspects, on the other hand, can be assured with a set of quality management methodologies: quality assurance, quality control and testing.

These terms are often used interchangeably but they refer to different aspects of software quality. While they do have a common goal, their approach differs.

Quality assurance (QA) can be defined as the continuous improvement and maintenance of the development process that enables quality control (QC). In other words, it focuses on the organizational side of quality management: we monitor and organize the production process in the best possible way, not only to save time and money but also to improve the overall quality of the product.

Quality control, on the other hand, checks the requirements and makes sure that the finished product complies with them and that errors on the manufacturing side are minimized or eliminated. Its most basic activity is the testing itself, whose aim is to detect technical issues in the software so they can be fixed. Testing lets us assess the product's usability, security, performance and compatibility. It is usually performed by the various engineers on the QA team, and since the advent of agile methodologies it has become common practice to test during development rather than after it.

7 pillars of software testing

Exhaustive testing is not possible. It wouldn’t be economical to test every combination of data inputs, scenarios and preconditions within an app. Doing so would require computer simulation and a solid grasp of combinatorics, since an app with just 10 inputs, each with 3 possible values, already has 3^10 = 59,049 combinations to test. Instead, we focus on the most probable scenarios rather than trying to cover them all, which is almost impossible and of little relevance outside academic circles.

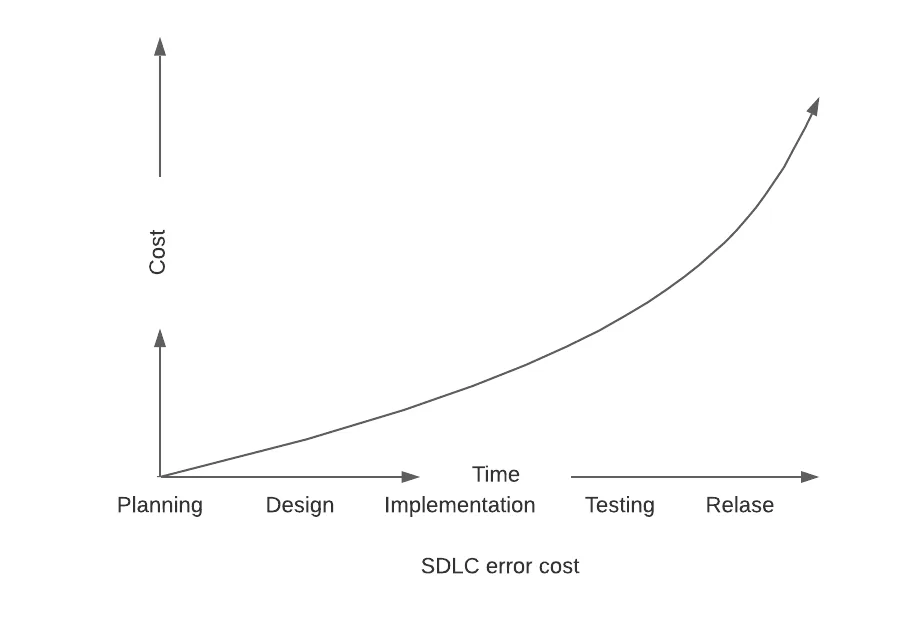
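To make the arithmetic concrete: when every input has the same number of options, the number of exhaustive scenarios is options to the power of inputs, so 10 inputs with 3 options each give 3^10 = 59,049. The short Python sketch below (the function names are our own, for illustration) computes that count and enumerates the combinations for a tiny case with `itertools.product`.

```python
from itertools import product


def exhaustive_case_count(num_inputs: int, values_per_input: int) -> int:
    """Scenarios needed to cover every combination when each input has the same number of options."""
    return values_per_input ** num_inputs


def enumerate_cases(options: list[str], num_inputs: int) -> list[tuple[str, ...]]:
    """List every combination explicitly; only feasible for very small inputs."""
    return list(product(options, repeat=num_inputs))


if __name__ == "__main__":
    print(exhaustive_case_count(10, 3))  # 59049 scenarios for 10 inputs x 3 options
    print(exhaustive_case_count(20, 3))  # 3486784401: doubling the inputs squares the count
    print(len(enumerate_cases(["low", "mid", "high"], 4)))  # 81
```

Doubling the number of inputs squares the number of scenarios, which is why prioritizing the most probable ones is the only practical option.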

Early testing is crucial to the software development life cycle. Defects caught in the early phases of a project are much cheaper to fix, because they require less refactoring and backtracking.

Defect clustering, an application of the Pareto principle (colloquially known as the 80/20 rule), states that roughly 80% of all issues are found in 20% of the system's modules. In other words, if a module or feature produces noticeably more issues than the rest during testing, it is much more likely to contain further issues.
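A minimal sketch of how defect clustering can guide where to focus testing, assuming you already track defect counts per module (the module names and numbers below are hypothetical). It ranks modules by defect count and returns the fewest modules that together account for a chosen share of all defects.

```python
def pareto_hotspots(defects_by_module: dict[str, int], share_percent: int = 80) -> list[str]:
    """Return the fewest modules, most defects first, that together hold at least
    `share_percent` percent of all recorded defects."""
    total = sum(defects_by_module.values())
    ranked = sorted(defects_by_module.items(), key=lambda item: item[1], reverse=True)
    hotspots: list[str] = []
    covered = 0
    for module, count in ranked:
        # Integer arithmetic keeps the threshold check exact.
        if covered * 100 >= share_percent * total:
            break
        hotspots.append(module)
        covered += count
    return hotspots


# Hypothetical defect counts per module, for illustration only.
DEFECTS_BY_MODULE = {
    "checkout": 41, "payments": 39, "search": 6, "profile": 5,
    "settings": 4, "help": 3, "about": 2,
}

if __name__ == "__main__":
    print(pareto_hotspots(DEFECTS_BY_MODULE))  # ['checkout', 'payments']
```

In this made-up data, 2 of the 7 modules hold 80 of the 100 defects, so they are where extra testing effort is most likely to pay off.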

The pesticide paradox is an apt name for a problem found in all walks of life: when the same pesticide is used over and over, insects develop a resistance to it and it becomes ineffective. The same logic applies to software testing. Running the same tests repeatedly will eventually stop uncovering new issues and defects, so engineers have to keep reviewing their tests and stay on the lookout for new ways to approach old problems.
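One common way to counter the pesticide paradox, sketched here under our own assumptions, is to vary the test data instead of always replaying the same hand-picked inputs: generate fresh random inputs on every run, check properties that must always hold, and log the seed so any failure can be replayed. `apply_discount` is a hypothetical function under test.

```python
import random
from typing import Optional


def apply_discount(price_cents: int, percent: int) -> int:
    """Hypothetical function under test: price after a whole-number percentage discount."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return price_cents * (100 - percent) // 100


def fixed_checks() -> None:
    # The same hand-picked inputs on every run: useful for regression,
    # but over time they stop finding anything new.
    assert apply_discount(1000, 10) == 900
    assert apply_discount(999, 0) == 999
    assert apply_discount(1000, 100) == 0


def randomized_checks(runs: int = 1000, seed: Optional[int] = None) -> int:
    """Check properties that must hold for any valid input, on fresh inputs each run.

    Returns the seed so a failing run can be reproduced exactly.
    """
    if seed is None:
        seed = random.randrange(2**32)
    rng = random.Random(seed)
    for _ in range(runs):
        price = rng.randint(0, 10_000_000)
        percent = rng.randint(0, 100)
        result = apply_discount(price, percent)
        # A discount never makes the price negative or higher than before.
        assert 0 <= result <= price, f"seed={seed}: {price=} {percent=} -> {result}"
    return seed


if __name__ == "__main__":
    fixed_checks()
    print("randomized checks passed with seed", randomized_checks())
```

The fixed checks still guard known behavior, while the randomized run explores a different slice of the input space every time.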

Testing is context dependent: work smart and focus on what really matters in your specific niche or industry. For instance, the overall approach, strategies, methodologies and types of testing will differ between a website and a life-saving medical device. For a website you might prioritize speed and usability and accept trade-offs in other areas, but you would not make that trade for a medical device.

Testing shows the presence of defects, not their absence. We can never be 100% sure there are no defects, but testing reduces the number of undiscovered issues, lowers the risk in production and gives us metrics to work with in planning.

The absence-of-errors fallacy is the belief that a product is successful simply because it has no defects. It’s completely wrong: a product can have a 99% bug-free record and still fail to meet the business needs and requirements. This can happen when the system is tested against the wrong requirements, which brings us back to early testing, where such issues are usually caught while they are still small.

Paradigm shift

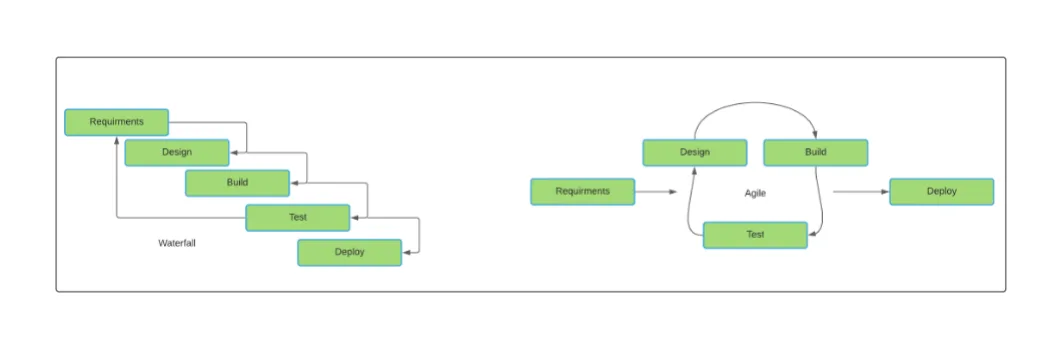

Waterfall and Agile methodologies play a big role in quality management, as they shape the way QA efforts are driven. Waterfall is an old, industrial-era way of producing in which testing comes at the end of the software development life cycle. By then the software is already designed and coded, and in practice defects detected at this stage can be too expensive to fix, since the cost of a defect keeps growing exponentially toward the end of the development process. To put it another way, if a defect is detected at the design stage, you only need to rework the design. But if it stays hidden until the product is built, you might need major refactoring of both the code and the design, which will suck your project dry. The same applies to features built on flawed logic: building on top of them only rubs more salt into the wound.

Agile, on the other hand, breaks the development process into smaller iterations, which we call sprints. This puts QA engineers on par with their peers who are developing the software. It asks a lot of the engineers involved in terms of communication skills and technical know-how, but it gives the process a much-needed breath of fresh air. QA engineers work in parallel with the development team, so defects, bugs and flaws can be fixed right after they appear, which is significantly cheaper and requires less work from the team. Moreover, the iterative Agile approach gives us a realistic estimate of the time the project needs. This testing approach is built on QA best practices, and throughout the process the QA procedures are built up and adjusted as the project's needs change or it moves into a different phase. Agile Scrum teams are widely reported to achieve the best results in software development.

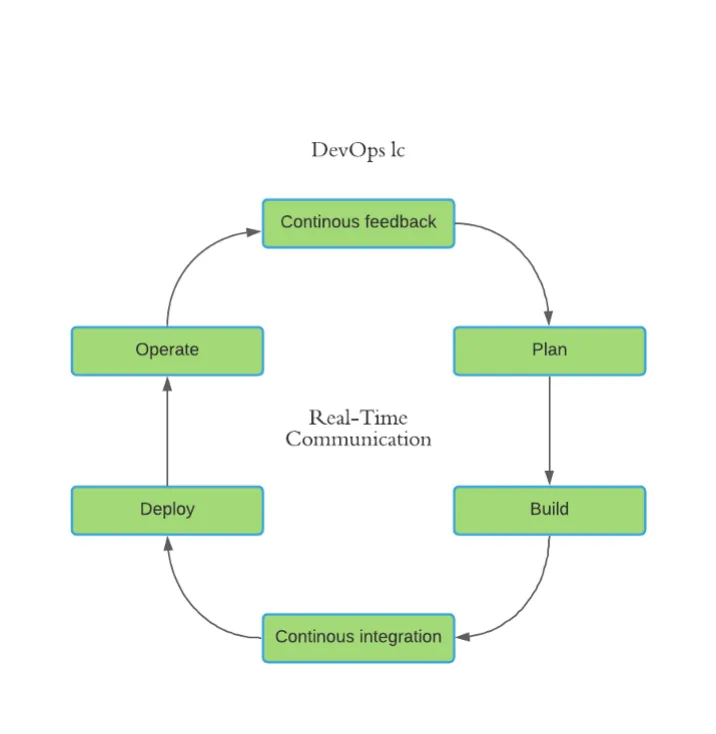

DevOps - QA

DevOps and QA might sound like two sci-fi civilizations at war with each other, but I assure (get it? get it?) you these two roles are very similar. As those of us working in Agile know very well, DevOps is an extension of QA that bridges the gap between development and operations or management. The term DevOps encompasses the practice known as continuous integration and continuous delivery/deployment (CI/CD).

DevOps also emphasizes automation and continuously integrated tooling, which speed up development and give the team a steady velocity. As a result, velocity usually becomes predictable after 3-4 sprints, giving you a baseline to build upon. An engineer in this role is expected to excel at almost every technical and interpersonal skill, so I like to think of it as being part of a special forces unit designed to tackle the biggest issues on a project. Demand for DevOps positions is expected to grow as Agile methodology gains wider acceptance.
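A rolling average of completed story points is one simple way to turn a 3-4 sprint history into a forecast. The sketch below assumes velocity is measured in story points and uses made-up sprint numbers; real teams may weight recent sprints differently or use other estimation methods.

```python
from math import ceil
from statistics import mean


def forecast_velocity(completed_points: list[int], window: int = 3) -> float:
    """Average story points completed over the most recent `window` sprints."""
    if len(completed_points) < window:
        raise ValueError(f"need at least {window} sprints of history")
    return float(mean(completed_points[-window:]))


def sprints_remaining(backlog_points: int, velocity: float) -> int:
    """Rough number of sprints needed to finish the backlog at the forecast velocity."""
    if velocity <= 0:
        raise ValueError("velocity must be positive")
    return ceil(backlog_points / velocity)


if __name__ == "__main__":
    history = [18, 24, 22, 26]             # hypothetical points completed per sprint
    velocity = forecast_velocity(history)  # average of the last 3 sprints
    print(velocity, sprints_remaining(130, velocity))  # prints: 24.0 6
```

Rounding up in `sprints_remaining` is a deliberate choice: a partially used sprint still has to be planned.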

Test plans and cases

The first thing to do when writing test plans and test cases is to get to know the software you are working on. It is equally important to understand the mindset of the people who will use it, as well as its business logic. The first step is to write a test plan: a high-level strategy document covering the project as a whole. Under the test plan sit the test cases, separate and smaller documents that cover each testing phase and that the QA team can update as the project progresses. Writing test plans and test cases is very time-consuming. James Whittaker has done notable work on tackling this problem and showing that a leaner approach works: he introduced the 10-minute test plan concept, in which 80 percent of the planning can be done in 30 minutes or less.

For more, see James Whittaker's posts on the Google Testing Blog.

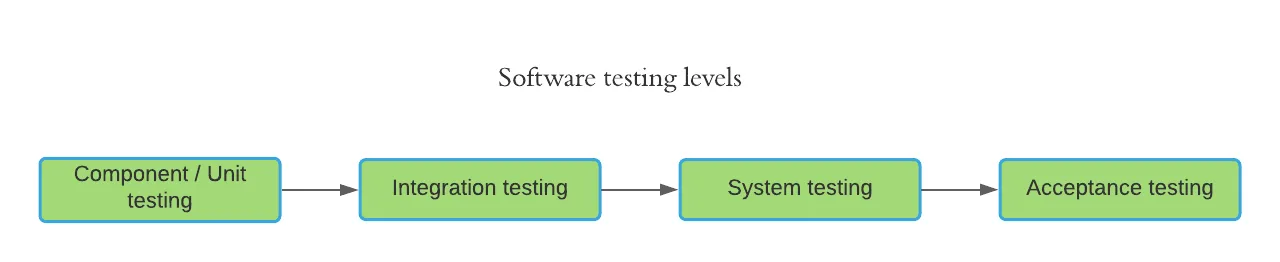
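The relationship between a test plan and its test cases can be sketched as a small data structure. The class and field names here are hypothetical and not a standard format; they only show one high-level plan owning many smaller test cases that the QA team updates as the project progresses.

```python
from dataclasses import dataclass, field


@dataclass
class QATestCase:
    """A smaller document under the plan, covering one scenario or phase."""
    case_id: str
    title: str
    steps: list[str]
    expected_result: str
    status: str = "not run"  # for example "not run", "passed" or "failed"


@dataclass
class QATestPlan:
    """The high-level document covering the project as a whole."""
    project: str
    scope: str
    cases: list[QATestCase] = field(default_factory=list)

    def add_case(self, case: QATestCase) -> None:
        self.cases.append(case)

    def update_status(self, case_id: str, status: str) -> None:
        """Test cases are living documents: update them as the project progresses."""
        for case in self.cases:
            if case.case_id == case_id:
                case.status = status
                return
        raise KeyError(case_id)

    def progress(self) -> dict[str, int]:
        """Count test cases per status."""
        counts: dict[str, int] = {}
        for case in self.cases:
            counts[case.status] = counts.get(case.status, 0) + 1
        return counts


if __name__ == "__main__":
    plan = QATestPlan(project="web shop", scope="checkout flow")
    plan.add_case(QATestCase("TC-1", "Pay with a valid card",
                             ["Add an item to the cart", "Pay with a valid card"], "Order is confirmed"))
    plan.add_case(QATestCase("TC-2", "Pay with an expired card",
                             ["Add an item to the cart", "Pay with an expired card"], "Payment is declined"))
    plan.update_status("TC-1", "passed")
    print(plan.progress())  # {'passed': 1, 'not run': 1}
```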

Software testing levels

Software is a complex system that interacts with many separate components, including third-party integrations. To test it efficiently, we need to look beyond our own source code and anticipate how it will interact with the other software we plan to work with. Testing proceeds through the levels listed below.

Unit testing – testing the smallest testable parts of the software in isolation to see whether they meet the design and requirements.

Integration testing – checking that the units work together properly as a group and interact without unexpected issues. Here we have bottom-up and top-down testing, which differ only in whether we start from the lowest-level components or from the top of the hierarchy.

System testing – testing the whole system to validate its compliance with the technical and functional requirements and with the quality standards set at the beginning of the project.

User acceptance testing – in this final stage, we validate the product against the user requirements to confirm it does what its users need. After that, it is up to the team to decide whether the software is ready to be deployed.
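To illustrate the first two levels, here is a minimal sketch using Python's built-in `unittest`. The functions `parse_price` and `cart_total` are hypothetical: the unit test checks `parse_price` on its own, while the integration test exercises `cart_total` together with the `parse_price` it depends on.

```python
import unittest
from decimal import Decimal


def parse_price(text: str) -> int:
    """Hypothetical unit: convert a price string such as "12.50" into cents.

    Fractions of a cent are truncated.
    """
    return int(Decimal(text.strip()) * 100)


def cart_total(lines: list[tuple[str, int]]) -> int:
    """Hypothetical unit that depends on parse_price: sum of price x quantity, in cents."""
    return sum(parse_price(price) * qty for price, qty in lines)


class ParsePriceUnitTest(unittest.TestCase):
    # Unit level: one small piece checked in isolation.
    def test_whole_and_fractional_prices(self):
        self.assertEqual(parse_price("12.50"), 1250)
        self.assertEqual(parse_price("3"), 300)


class CartIntegrationTest(unittest.TestCase):
    # Integration level: cart_total and parse_price exercised together.
    def test_total_of_several_lines(self):
        self.assertEqual(cart_total([("12.50", 2), ("0.99", 3)]), 2797)


if __name__ == "__main__":
    unittest.main()
```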

Software testing methods

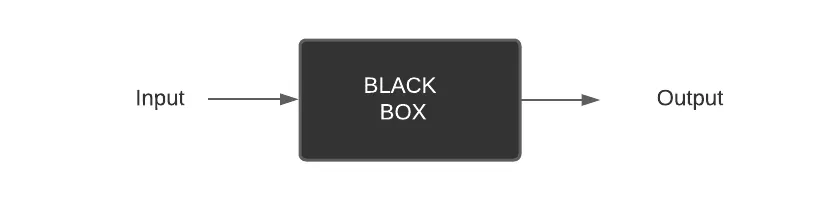

Black box testing, the best-known method, gets its name from the fact that the QA engineer doesn’t know how the application works internally and focuses only on inputs and outputs. It is used primarily to check the functionality of the software and whether it meets end user demands, usually at the system and user acceptance testing levels.

White box testing is where developers usually get involved, since it requires deep knowledge of the code and tests the software's internal structure. It is typically done at the unit and integration levels to strengthen security and improve the design.
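The difference between black box and white box tests shows up in how test inputs are chosen. In this sketch, `shipping_fee` is a hypothetical function with an assumed requirement ("orders of 50.00 or more ship free; express shipping always costs 9.90"). The black box checks are derived only from that requirement, while the white box checks are derived from reading the code, with at least one case per branch.

```python
def shipping_fee(order_total_cents: int, express: bool) -> int:
    """Hypothetical function under test: fee in cents."""
    if express:
        return 990
    if order_total_cents >= 5000:
        return 0
    return 490


def black_box_checks() -> None:
    # Derived from the written requirement only: inputs in, outputs checked.
    assert shipping_fee(4999, express=False) == 490
    assert shipping_fee(5000, express=False) == 0  # boundary value taken from the requirement


def white_box_checks() -> None:
    # Derived from reading the code: every branch is executed at least once.
    assert shipping_fee(100, express=True) == 990      # branch 1
    assert shipping_fee(100_000, express=True) == 990  # branch 1 wins even above the threshold
    assert shipping_fee(5000, express=False) == 0      # branch 2
    assert shipping_fee(0, express=False) == 490       # branch 3


if __name__ == "__main__":
    black_box_checks()
    white_box_checks()
    print("all checks passed")
```

In practice, a branch coverage tool such as coverage.py (`coverage run --branch`) can confirm that white box tests really reach every path.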

Gray box testing combines the two previous methods. With it, an experienced engineer can use knowledge of the system's internal workings to track down and resolve defects more easily, while still testing from a black box perspective.

Last but not least, there is ad hoc testing. It is usually done without planning or documentation, can happen at any time and is largely improvised. Defects found this way are harder to reproduce, but the method gives quite good results and, more importantly, it gives them fast.

Types of software testing

There are different types of testing, depending on the objective of the process. Let’s look at the most important ones according to an ISTQB survey.

Functional testing is arguably the most important testing type: we test the software against its functional requirements by giving it an input and examining the output, which makes it a black box approach. Without working functions, there is no point in testing any of the software's non-functional elements.

Performance testing is without a doubt one of the most important non-functional testing types. It investigates the stability of the system under stress or heavy workload. There are different kinds of performance testing, but they all involve giving the system more workload than expected and trying to break it, within reason. You need some headroom above the expected load, but pushing the numbers too far will just drain the company's money for no valid reason, which will probably come back to bite the QA engineer in charge, so don’t go crazy with the numbers.
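A bare-bones sketch of the ramp-up idea behind performance testing, using only the standard library. `handle_request` is a hypothetical stand-in for the system under test, and the concurrency levels are placeholders to pick relative to your expected load; dedicated load-testing tools do this far more thoroughly.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from statistics import quantiles


def handle_request(payload: int) -> int:
    """Hypothetical stand-in for the system under test; replace with a real call."""
    time.sleep(0.001)  # simulate a small amount of I/O
    return payload * 2


def timed_call(payload: int) -> tuple[float, bool]:
    """Return (latency in seconds, whether the call succeeded)."""
    start = time.perf_counter()
    try:
        handle_request(payload)
        ok = True
    except Exception:
        ok = False
    return time.perf_counter() - start, ok


def load_step(concurrency: int, requests: int) -> dict:
    """Send `requests` calls (at least 2) through `concurrency` parallel workers and summarize."""
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        results = list(pool.map(timed_call, range(requests)))
    latencies = [latency for latency, _ in results]
    return {
        "concurrency": concurrency,
        "p95_ms": quantiles(latencies, n=20, method="inclusive")[-1] * 1000,  # 95th percentile
        "max_ms": max(latencies) * 1000,
        "errors": sum(1 for _, ok in results if not ok),
    }


def ramp_up(levels: list[int], requests_per_level: int = 200) -> list[dict]:
    """Raise the load step by step, past the expected level, to see where latency or errors climb."""
    return [load_step(level, requests_per_level) for level in levels]


if __name__ == "__main__":
    # Placeholder levels: choose them relative to your expected peak, within reason.
    for step in ramp_up([1, 8, 32]):
        print(step)
```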

Use case testing is a widely used technique that lets us put ourselves in the end user's shoes and walk through realistic scenarios. It is a user-oriented way of testing and writing tests, and it is useful every step of the way because it helps us balance what the user wants with what the business and engineering want.
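Use case tests usually read like the user's story. In the sketch below, the `Cart` class and the scenario are hypothetical; the test follows one customer's path from start to finish, with given/when/then comments marking the steps of the use case.

```python
from dataclasses import dataclass, field


@dataclass
class Cart:
    """Hypothetical shopping cart used only to illustrate a use case test."""
    items: dict[str, int] = field(default_factory=dict)  # product name -> quantity
    checked_out: bool = False

    def add(self, product: str, qty: int = 1) -> None:
        if self.checked_out:
            raise RuntimeError("cart already checked out")
        self.items[product] = self.items.get(product, 0) + qty

    def remove(self, product: str) -> None:
        self.items.pop(product, None)

    def checkout(self) -> None:
        if not self.items:
            raise ValueError("cannot check out an empty cart")
        self.checked_out = True


def test_customer_changes_mind_then_pays() -> None:
    # Use case: a customer fills a cart, changes their mind about one item, then pays.
    cart = Cart()                    # Given an empty cart
    cart.add("headphones")           # When the customer adds two products...
    cart.add("phone case", qty=2)
    cart.remove("headphones")        # ...removes one of them...
    cart.checkout()                  # ...and checks out
    assert cart.items == {"phone case": 2}  # Then only the remaining item is ordered
    assert cart.checked_out


if __name__ == "__main__":
    test_customer_changes_mind_then_pays()
    print("use case passed")
```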

Exploratory testing is one of my favorites, as it lets engineers express their individuality by testing the software ad hoc, as they see fit. The emphasis here is on validating the experience, not the code, and this approach usually produces valuable results.

Usability testing is performed from the end user's point of view to see whether the software is easy to use and whether the implementation suits its users.

I left regression testing for last because it is the first type a new QA engineer will come into contact with. In regression testing, after a deployment or an update, we rerun all the tests covering the specific area that was changed. A better practice is a full regression, which makes sure that the interactions between the old and the new code haven’t jeopardized the functionality or stability of any part of the software. The two approaches can differ hugely in effort, and on some projects there simply isn’t enough time for a full regression, or the project itself is too big.
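Choosing between a targeted and a full regression can be sketched as test selection over a map from modules to the tests that cover them. The module and test names below are hypothetical, and the map itself is an assumption: in practice it might come from coverage data or from how the test suite is organized.

```python
def select_regression_tests(
    tests_by_module: dict[str, set[str]],
    changed_modules: set[str],
    full: bool = False,
) -> list[str]:
    """Return the tests to rerun after a deployment or update.

    Targeted run: only the tests mapped to the changed modules.
    Full run: every known test, to catch unexpected interactions between old and new code.
    """
    if full:
        selected = set().union(*tests_by_module.values())
    else:
        selected = set().union(*(tests_by_module.get(m, set()) for m in changed_modules))
    return sorted(selected)


# Hypothetical mapping from modules to the tests that cover them.
TESTS_BY_MODULE = {
    "checkout": {"test_pay_by_card", "test_apply_coupon"},
    "search": {"test_search_by_name"},
    "profile": {"test_change_password"},
}

if __name__ == "__main__":
    print(select_regression_tests(TESTS_BY_MODULE, {"checkout"}))
    # ['test_apply_coupon', 'test_pay_by_card']
    print(select_regression_tests(TESTS_BY_MODULE, {"checkout"}, full=True))
    # ['test_apply_coupon', 'test_change_password', 'test_pay_by_card', 'test_search_by_name']
```

The targeted run is cheaper but trusts the map; the full run costs more and catches interactions the map does not know about.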

In conclusion

Quality assurance can bring a lot to the table in terms of improving production cycles and avoiding the usual pitfalls. It adds value immediately because of the sheer amount of knowledge the QA team gains about the product along the way, which can also be used in other areas of the software development life cycle, such as additional services. It brings down the cost of the product by catching and fixing defects in time, and it improves the team’s velocity. Moreover, it has become an essential part of any development team working with Agile methodology as it was originally envisioned.

The future also looks bright, as there is a lot of interest in security testing, especially in cloud environments. There we would be talking about white box testing methodologies, as the industry is moving toward well-versed engineers, or jacks of all trades, as we would call them. Some would consider that an insult, but wait until they see how many advantages such engineers have over specialized developers. We should also see a rise in ML-based tools that take over tasks once reserved for humans, letting us focus more on organization and planning. This is true for test automation as well, and we can expect each person to take on even more work with the help of these tools.

Big data is also booming. Compliance is one of its most painful areas, and a lot of work is needed to make sure data is secured as data protection rules tighten across the world. As you can already tell, QA is on the rise, and it has a big impact on the success of a company and its final product.
